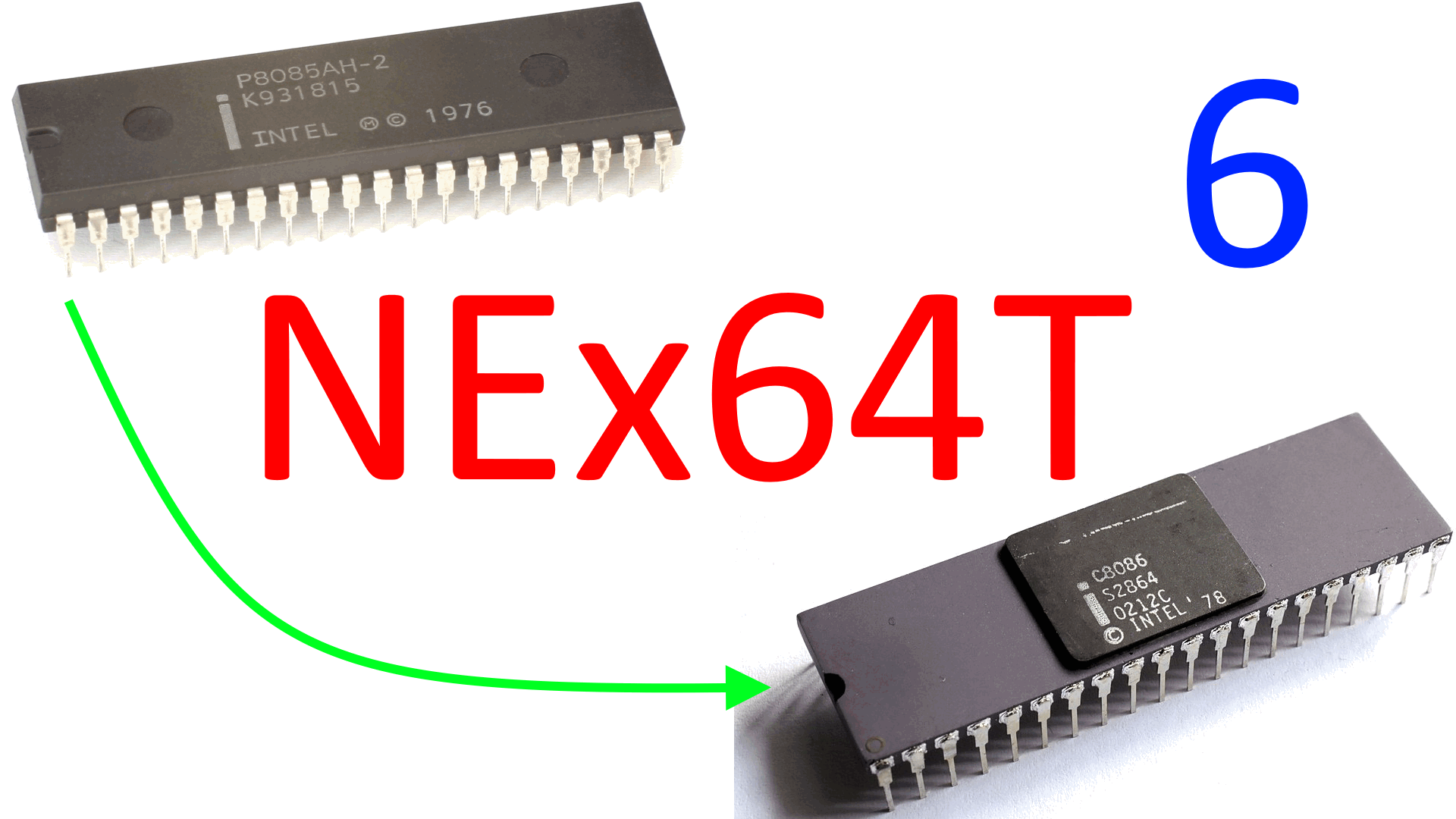

Legacy is an inescapable topic when it comes to the x86 and x64 architectures. Having mentioned it again in the previous article, the time has come to “get it out of the way”, as they say, by looking at how NEx64T has managed to deal with it despite being a completely different architecture in terms of opcode structure.

In fact, the biggest problems this new architecture has had to face stem precisely from the constraint of remaining fully compatible, at the assembly code level, with the two architectures it is intended to replace. This also means taking on their burdens, which were illustrated in detail in an older series of articles published on this site (in Italian only).

All the old stuff away!

It is important to point out that NEx64T does not implement everything currently supported by x86 (x64 is newer and has already dropped some of it, though not much). In particular, it leaves out the 16-bit modes (real, protected, and virtual 8086).

In theory it could, and all that would be needed is to generate the appropriate NEx64T instructions (32- or 64-bit, it doesn’t matter) for each 16-bit x86 instruction. In most cases this mapping is 1:1, because an 8086/80186/80286 instruction has a close counterpart in the new ISA. In other cases more is needed: for example, to “cut” the generated addresses from 32/64 bits down to the 20 bits used in real and virtual 8086 modes, to reduce the results of certain calculations from 32/64 to 16 bits, or to handle the “high” registers, which we will discuss later.
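Below is a minimal Python sketch of two of these extra steps. It assumes the translated code keeps 16-bit segment and offset values in ordinary wide registers. The first step forms a real-mode address (segment * 16 + offset, wrapped to 20 bits as on the 8086). The second cuts a wider result back to 16 bits. The function names are hypothetical and do not correspond to NEx64T instructions.

```python
def real_mode_address(segment: int, offset: int) -> int:
    """Physical address as formed by the 8086 in real mode."""
    segment &= 0xFFFF            # segment and offset are 16-bit values
    offset &= 0xFFFF
    address = (segment << 4) + offset
    return address & 0xFFFFF     # only 20 address lines: wrap around at 1 MiB


def to_16_bits(value: int) -> int:
    """Keep only the low 16 bits of a result computed in a 32/64-bit register."""
    return value & 0xFFFF


# Example: 'add ax, bx' executed on wide registers, then cut back to 16 bits
# (flags are not modelled).
ax = to_16_bits(0xFFFF + 0x0002)
```

For instance, real_mode_address(0xFFFF, 0x0010) wraps to 0, exactly as the original 8086 did.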

The decision not to proceed in this direction was based on the fact that for decades now, almost all x86 / x64 operating systems and applications have been running in 32-bit or 64-bit protected mode (and with memory paging always active, by the way), so it makes no objective sense to waste time and resources supporting stuff that will almost certainly never be used.

The focus of NEx64T is therefore exclusively on the 32- and 64-bit protected modes, cutting out everything in x86 and x64 that falls outside this area. Intel made the same decision (much later!) when it proposed the new X86-S architecture (article in Italian only), and went even further. X86-S does not support 32-bit operating systems at all, only 64-bit ones, so 32-bit applications running on a 64-bit OS are the only 32-bit code left.

Goodbye prefixes!

We have already discussed this at length in the introductory article of the series. The other big piece of legacy that has been completely removed is the much-maligned prefixes, which x86 and x64 use to alter the behaviour of instructions by enabling certain features.

In particular, the prefixes that change the size of the data being manipulated are no longer needed (by default, several x86/x64 instructions work only with a certain size). This information is now encoded directly in a dedicated field of the instruction, as explained in the second article.

The prefix that truncates an address from 64 to 32 bits, or from 32 to 16 bits, has not been implemented either. Its use is rare: it has not turned up in any of the code disassembled so far, even though it underpins the x32 ABI (a good experiment that never took root and is no longer supported).

The chosen approach is simply to emulate it, should the need arise. One instruction computes the address, and the result is copied into a register of the appropriate size. That register is then used to reference the memory location in the instruction that needed this functionality. This costs an extra instruction, but given how rare the case is, it is a cost that can easily be borne.
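The emulation described above can be sketched in Python as follows. The sketch assumes 64-bit mode, where the 0x67 address-size prefix keeps only the low 32 bits of the computed address. The helper names and the dictionary used as memory are illustrative assumptions, not part of NEx64T.

```python
MASK32 = 0xFFFF_FFFF
MASK64 = 0xFFFF_FFFF_FFFF_FFFF


def effective_address(base: int, index: int = 0, scale: int = 1, disp: int = 0) -> int:
    """Full-width (64-bit) address: base + index * scale + displacement."""
    if scale not in (1, 2, 4, 8):
        raise ValueError("scale must be 1, 2, 4 or 8")
    return (base + index * scale + disp) & MASK64


def load_with_addr32(memory: dict, base: int, index: int = 0,
                     scale: int = 1, disp: int = 0) -> int:
    """Emulate a load that used the address-size prefix in 64-bit mode."""
    # Extra step: compute the address and move it into a 32-bit-sized
    # register, i.e. keep only its low 32 bits (zero-extended).
    addr32 = effective_address(base, index, scale, disp) & MASK32
    # The original instruction then addresses memory through that register.
    return memory.get(addr32, 0)
```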

Segmentation

Segmentation, on the other hand, cannot be completely dispensed with, as already mentioned in the previous article, since it is used to implement the TLS (Thread-local storage) mechanism.

The most common use is handled much more efficiently and quickly, as already shown, but the general case requires specific measures. In particular, it must be possible to specify the segment (the selector, in protected mode) that supplies the TLS base address when the addressing mode is not absolute.

In this case, NEx64T solves the problem by using larger opcodes, which are able to extend the behaviour of the instructions contained in them, making available a whole series of functionalities (set out in a previous article) that can be enabled (individually and independently of the others), including the use of the segment for TLS, precisely.
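This Python sketch gives a rough idea of what the segment adds to such an access. It assumes 64-bit mode, where FS or GS contributes only its base address. The linear address of an access like fs:[rbx + rcx*8 + 16] is that base plus the ordinary effective address. The class and function names are hypothetical, and the NEx64T encoding of the extension is not modelled.

```python
from dataclasses import dataclass

MASK64 = 0xFFFF_FFFF_FFFF_FFFF


@dataclass
class SegmentBase:
    """Hypothetical model: in 64-bit mode FS/GS only contribute a base address."""
    base: int = 0


def tls_linear_address(seg: SegmentBase, base_reg: int, index_reg: int = 0,
                       scale: int = 1, disp: int = 0) -> int:
    """Linear address of an access such as fs:[rbx + rcx*8 + 16]."""
    effective = base_reg + index_reg * scale + disp   # normal addressing mode
    return (seg.base + effective) & MASK64            # plus the segment base


fs = SegmentBase(base=0x7000_0000)
```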

So the price to be paid remains exclusively in the area of instruction length, which in any case does not affect code density, as these scenarios are extremely rare (moreover, even x86/x64 instructions can be quite long in cases where TLS is needed).

Unfortunately, the legacy of segmentation does not stop there, because this mechanism relies on several registers. There are “external” ones (directly accessible with special instructions) and “internal” ones (which hold the information read from the descriptors the segment registers point to). NEx64T must necessarily support all of them, at least as far as storing their contents is concerned.
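To give an idea of the “internal” state mentioned above, here is a Python sketch that unpacks a standard 8-byte x86 segment descriptor. It extracts the base, limit, access byte, and flags that a processor caches when a segment register is loaded. The field layout is the x86 one. DescriptorCache and load_descriptor are illustrative names, and how NEx64T actually stores this data is not described here.

```python
from dataclasses import dataclass


@dataclass
class DescriptorCache:
    base: int      # 32-bit segment base
    limit: int     # limit in bytes, after applying the granularity bit
    access: int    # present bit, DPL, S bit and type
    flags: int     # G, D/B, L and AVL bits (high nibble of byte 6)


def load_descriptor(raw: bytes) -> DescriptorCache:
    """Unpack an 8-byte x86 segment descriptor (bytes in memory order)."""
    if len(raw) != 8:
        raise ValueError("a segment descriptor is 8 bytes long")
    limit = raw[0] | (raw[1] << 8) | ((raw[6] & 0x0F) << 16)
    base = raw[2] | (raw[3] << 8) | (raw[4] << 16) | (raw[7] << 24)
    flags = raw[6] >> 4
    if flags & 0x8:                     # G = 1: limit counts 4 KiB pages
        limit = (limit << 12) | 0xFFF
    return DescriptorCache(base=base, limit=limit, access=raw[5], flags=flags)
```

For example, the classic flat code descriptor 0x00CF9A000000FFFF (stored little-endian) yields base 0, limit 0xFFFFFFFF, access byte 0x9A, and flags 0xC.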

There is also a varied set of instructions that pertain exclusively to segmentation, but almost all of them are not implemented in hardware. Instead, they are emulated with the more general mechanism described later, which is used to support all the legacy instructions that have been removed from the ISA.

This keeps the processor cores leaner, since they only have to support a minimal set of very simple instructions, used solely to emulate just enough to make segmentation work. This reduces silicon area and also keeps the backend simpler.

LOCK: read-modify-write instructions

The so-called RMW (read-modify-write) instructions are a cornerstone for scenarios in which the processor must perform atomic operations on systems where access to certain resources is contended (with other peripherals, with other processors, or even between cores of the same processor).

x86 and x64 are well known for using a special prefix, called LOCK. It starts a sequence of bus operations that blocks all other accesses until it completes, ensuring that no race conditions can occur on memory locations contended by the various actors.
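The guarantee that LOCK provides can be modelled in Python with a single mutex standing in for the locked bus, so that every read-modify-write happens as one indivisible step. This is a conceptual sketch only. Memory, locked_xadd, and locked_cmpxchg are hypothetical names that loosely mirror LOCK XADD and LOCK CMPXCHG. Modern x86 processors normally lock only the affected cache line rather than the whole bus.

```python
import threading

MASK64 = 0xFFFF_FFFF_FFFF_FFFF


class Memory:
    """Toy shared memory whose locked RMW operations are serialised by one lock."""

    def __init__(self) -> None:
        self.cells = {}
        self.bus_lock = threading.Lock()   # stands in for the locked bus/cache line

    def locked_xadd(self, addr: int, value: int) -> int:
        """Like LOCK XADD: add value to the cell and return the old contents."""
        with self.bus_lock:                # no other access can interleave here
            old = self.cells.get(addr, 0)
            self.cells[addr] = (old + value) & MASK64
            return old

    def locked_cmpxchg(self, addr: int, expected: int, new: int) -> bool:
        """Like LOCK CMPXCHG: store new only if the cell still holds expected."""
        with self.bus_lock:
            if self.cells.get(addr, 0) != expected:
                return False
            self.cells[addr] = new
            return True


def worker(mem: Memory, times: int) -> None:
    for _ in range(times):
        mem.locked_xadd(0, 1)
```

Four threads each running worker(mem, 1000) always leave exactly 4000 in the cell, because no increment can be interleaved with another.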

For NEx64T, I opted instead for a few specific opcodes, which are not necessarily longer than the normal ones (the “feature extension” mechanism described earlier is not used).

The idea is to group all these instructions into dedicated opcodes. The decoder can then tell that an instruction is an RMW one in an extremely simple and efficient way (just by examining a few bits of the opcode), without resorting to prefixes or to tables listing which instructions must lock the bus.

This was also possible because the number of x86/x64 instructions for which it is possible to use the LOCK prefix is extremely small, so it was easy to group them (depending on the number and type of arguments) into precise opcode subsets that are easily checked by the decoders.
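The difference in decoding effort can be sketched in Python. The set below lists the instructions that Intel's documentation allows with LOCK (memory destination only). The mask check next to it illustrates the NEx64T idea of recognising an RMW instruction from a few opcode bits. The mask and pattern values are purely hypothetical, since the actual encoding is not given here.

```python
# Instructions that accept the LOCK prefix on x86/x64 (memory destination only).
# An x86 decoder has to check the opcode against a list like this one.
LOCKABLE = frozenset({
    "ADD", "ADC", "AND", "BTC", "BTR", "BTS", "CMPXCHG", "CMPXCHG8B",
    "CMPXCHG16B", "DEC", "INC", "NEG", "NOT", "OR", "SBB", "SUB",
    "XOR", "XADD", "XCHG",
})

# Hypothetical encoding (NOT the real NEx64T one): the top 5 bits of the first
# 16-bit opcode word select the instruction group, and one value marks RMW.
RMW_MASK = 0xF800
RMW_PATTERN = 0x7800


def is_rmw(opcode_word: int) -> bool:
    """One AND and one compare: no prefix scan and no lookup table."""
    return (opcode_word & RMW_MASK) == RMW_PATTERN
```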

REP: repetition of “string” instructions

The so-called “string” instructions (LODS, STOS, MOVS, CMPS, SCAS, INS, OUTS) have been the object of derision for a very long time, as they were considered too complicated to implement, very slow, and had the not inconsiderable property of blocking the processor pipeline during their execution.

Yet they have also been widely used because they allow certain fairly common operations (STOS / filling memory areas with a certain value; MOVS / copying memory blocks) to be performed with a single instruction (to the advantage of the well-known code density).

Interestingly, starting with the Nehalem family, Intel has worked on accelerating some of these instructions (the most common and useful ones, of course), speeding them up enormously and bringing them back into favour.

For these reasons, NEx64T could not ignore them, but it did away with their prefixes (REP/REPZ and REPNZ) entirely. They became compact instructions (no prefixes are needed even to change the operand size), all grouped in a single opcode block to simplify decoding.
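For reference, this Python sketch models the semantics of three repeated string instructions on a byte array: REP MOVSB (block copy), REP STOSB (fill), and REPNE SCASB (scan, as in a classic strlen). RSI, RDI, and RCX are passed as plain integers and the direction flag as df. The sketch deliberately ignores segments, interrupts, and wider operand sizes, and says nothing about how NEx64T encodes these instructions.

```python
def rep_movsb(mem: bytearray, rsi: int, rdi: int, rcx: int, df: bool = False):
    """REP MOVSB: copy RCX bytes from [RSI] to [RDI]; returns (RSI, RDI, RCX)."""
    step = -1 if df else 1          # DF = 1 walks downwards through memory
    while rcx != 0:
        mem[rdi] = mem[rsi]
        rsi, rdi, rcx = rsi + step, rdi + step, rcx - 1
    return rsi, rdi, rcx


def rep_stosb(mem: bytearray, rdi: int, rcx: int, al: int, df: bool = False):
    """REP STOSB: fill RCX bytes at [RDI] with AL; returns (RDI, RCX)."""
    step = -1 if df else 1
    while rcx != 0:
        mem[rdi] = al & 0xFF
        rdi, rcx = rdi + step, rcx - 1
    return rdi, rcx


def repne_scasb(mem: bytearray, rdi: int, rcx: int, al: int, df: bool = False):
    """REPNE SCASB: scan for AL starting at [RDI]; returns (RDI, RCX, ZF)."""
    step = -1 if df else 1
    zf = False                      # this sketch reports False if RCX starts at 0
    while rcx != 0:
        zf = mem[rdi] == (al & 0xFF)
        rdi, rcx = rdi + step, rcx - 1
        if zf:                      # REPNE stops as soon as a match sets ZF
            break
    return rdi, rcx, zf
```

The strlen idiom follows directly. Scanning a buffer holding "hello", a zero byte, and "world" from offset 0 with RCX = 11 and AL = 0 stops with RCX = 5, so the length is 11 - 5 - 1 = 5.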

The four “high” registers

x86 and x64 allow the use of four so-called “high” registers: AH, BH, CH and DH. This is a legacy of 8086 that was very useful in being able to use as many as eight 8-bit registers (along with AL, BL, CL and DL) when the number of registers available was very low (we are talking about the late 1970s!).

In reality, these are not separate registers. They simply provide direct access (i.e. without extraction and/or insertion operations) to the “high” byte (bits 15-8) of one of the four 16-bit data registers of the 8086 (AX, BX, CX, DX).

Supporting this anachronistic feature would have required a lot of contortions and wasted precious opcode bits in an architecture like NEx64T, which allows 16 or even 32 registers to be used without any restrictions.

So at a certain point I decided to cut this umbilical cord completely, deleting the concept of “high” registers from this new ISA, and recovering the corresponding space in the opcodes. The problem of their support, however, remained, since (as has already been said several times) NEx64T must be 100% compatible at source code level with x86 and x64.

The solution lies in a couple of simple instructions that copy the “high” byte of one of the four registers mentioned above into a dedicated register (R30), and vice versa. Normal instructions can then operate on the extracted byte. When an instruction uses two “high” registers, the second extracted byte goes into R31.

The price to pay, of course, is having to execute several instructions: first to extract the “high” byte(s) and then, if needed, to copy the result back into the target “high” byte. This ranges from one instruction (to extract the “high” byte used as a data source) to a maximum of four (to extract both “high” bytes and finally write the result into one of them).
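Here is a Python sketch of what this costs for an instruction like add ah, bh. It assumes a four-step sequence: extract AH into R30, extract BH into R31, perform the add, and write R30 back into AH. Registers are modelled as a dictionary, the helper names are hypothetical, and flags are not computed.

```python
MASK64 = 0xFFFF_FFFF_FFFF_FFFF


def get_high8(reg: int) -> int:
    """Bits 15-8 of a register: the byte that AH/BH/CH/DH name on x86."""
    return (reg >> 8) & 0xFF


def set_high8(reg: int, byte: int) -> int:
    """Replace bits 15-8 of reg with byte, leaving every other bit untouched."""
    return (reg & (MASK64 ^ 0xFF00)) | ((byte & 0xFF) << 8)


def emulate_add_ah_bh(regs: dict) -> None:
    """Hypothetical expansion of 'add ah, bh' (flags are not modelled)."""
    regs["r30"] = get_high8(regs["rax"])                  # 1. AH -> R30
    regs["r31"] = get_high8(regs["rbx"])                  # 2. BH -> R31
    regs["r30"] = (regs["r30"] + regs["r31"]) & 0xFF      # 3. ordinary 8-bit add
    regs["rax"] = set_high8(regs["rax"], regs["r30"])     # 4. R30 -> AH
```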

This is a somewhat painful choice (although obsolete, these registers are still present in code, albeit in less than 1% of it). However, it had little impact on either code density or performance (number of instructions executed), and it got rid of more useless old baggage, given how many registers are normally available.

Millicode for the old instructions

About a hundred particularly old instructions were removed by resorting to so-called millicode, as already mentioned. They do not affect the size of the cores, since they do not exist in hardware and need not be implemented there. The only cost is the small amount of logic behind the millicode mechanism itself.

This covers the instructions for manipulating BCD numbers (AAA, AAD, DAA, …) and almost all of those relating to segments and descriptors (MOV/PUSH/POP to/from segment registers, LAR/LSL, …). It also covers the instructions for “far” calls (CALL/JMP/RET “FAR”), for interrupts (INT, IRET, …), and for I/O (IN, OUT; only a couple of simple ones remain to handle these cases), as well as some very complicated ones (PUSHA, POPA, ENTER, …).

They are actually still there (NEx64T must be fully compatible at the assembly code level, as we have said many times) and can be executed. However, their execution is delegated to dedicated millicode routines that emulate their behaviour using normal instructions.
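As an example of what such a routine has to do, this Python sketch reproduces AAA using only ordinary masks and additions, following the pseudocode in Intel's documentation. The dispatch table keyed by mnemonic is a hypothetical stand-in for however the millicode mechanism actually locates its routines.

```python
from typing import Tuple


def aaa(ax: int, af: bool) -> Tuple[int, bool, bool]:
    """ASCII Adjust after Addition using plain arithmetic; returns (AX, AF, CF)."""
    if (ax & 0x0F) > 9 or af:          # low digit of AL went past 9
        ax = (ax + 0x106) & 0xFFFF      # AX += 106h, as in Intel's pseudocode
        af = cf = True
    else:
        af = cf = False
    ax = (ax & 0xFF00) | (ax & 0x0F)    # AL <- AL AND 0Fh
    return ax, af, cf


# Hypothetical dispatch table consulted when a removed opcode is met.
MILLICODE_ROUTINES = {"AAA": aaa}
```

For instance, adding the ASCII digits '9' and '5' leaves 0x6E in AL. aaa(0x006E, False) then returns (0x0104, True, True), the unpacked BCD form of 14.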

This required a particularly flexible solution (the details of which are not presented here), because these instructions vary widely in their arguments: they may have none, take immediate values, or refer to memory locations.

The important thing in the end is that the result is achieved. Moreover, this mechanism is flexible enough to allow further instructions to be implemented (there is still plenty of room) without weighing down silicon in any way.

Of course, the execution cannot be as fast as for an instruction implemented in hardware, but considering that this is extremely old stuff and used little or not at all, it is a sacrifice that can certainly be made.

The x87 FPU

Although it is one of the oldest and most despised components of the x86 architecture (with x64 it has been deprecated, but not removed), the x87 FPU is also present in NEx64T, always and exclusively as a matter of total backward compatibility.

Emulating its operation with the aforementioned millicode mechanism would have been a serious possibility, and not only theoretically: “intercepting” its opcodes/instructions and “redirecting” their execution in appropriate subprograms is trivial to say the least.

But it is not yet clear how much important software still depends on it and, above all, how decisive performance is. Emulating a very old instruction such as AAA, for example, is fairly easy and relatively fast, but the same cannot be said of x87 code.

This is because extremely rare instructions such as AAA turn up only occasionally, as isolated occurrences scattered among millions or even billions of other executed instructions. Running a “millicode version” of them therefore has a negligible effect on the overall count.

Those of the old FPU, on the other hand, can be part of entire application blocks with critical parts executed within cycles and, therefore, put there to grind out numbers. Good performance, in this case, is absolutely crucial.

This means that millicode cannot be relied upon entirely for their execution unless you are fairly sure (benchmarks in hand) that all that code can run at least decently.
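The argument can be put into numbers with a simple weighted-average model. Every natively executed instruction costs one unit and every emulated one costs emulation_cost units. The figures used below are purely illustrative assumptions, not measurements: 50 units per emulated instruction, and either one emulated instruction per million or 30% of the instruction mix in an x87 loop.

```python
def relative_run_time(emulated_fraction: float, emulation_cost: float) -> float:
    """Run time relative to full hardware support (native instructions cost 1)."""
    if not 0.0 <= emulated_fraction <= 1.0:
        raise ValueError("emulated_fraction must be between 0 and 1")
    return (1.0 - emulated_fraction) * 1.0 + emulated_fraction * emulation_cost


# Illustrative numbers only: 50 units per millicode-emulated instruction.
rare_case = relative_run_time(1e-6, 50)   # one AAA per million instructions
x87_loop = relative_run_time(0.3, 50)     # x87 instructions as 30% of a hot loop
```

Under these assumptions, the rare instruction adds about 0.005% to the run time (rare_case is about 1.000049). The x87-heavy loop, by contrast, would run more than 15 times slower (x87_loop is about 15.7).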

For these reasons, the choice was to support almost the entire x87 instruction set in hardware. The exceptions are museum pieces such as the instructions that read or write BCD values (FBLD and FBSTP, which are emulated with millicode).
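To show what the millicode for these two instructions has to deal with, here is a Python sketch of the 80-bit packed BCD format. Bytes 0 to 8 hold 18 decimal digits, two per byte, with the least significant pair first, and bit 7 of byte 9 is the sign. The function names are hypothetical. Rounding and the invalid-operand behaviour of the real instructions are simplified, as noted in the comments.

```python
def fbld_decode(raw: bytes) -> int:
    """Integer value of an x87 80-bit packed BCD operand (what FBLD loads)."""
    if len(raw) != 10:
        raise ValueError("packed BCD operands are 10 bytes long")
    value = 0
    for byte in reversed(raw[:9]):        # byte 8 holds the most significant pair
        high, low = byte >> 4, byte & 0x0F
        if high > 9 or low > 9:
            # The real instruction gives an undefined result; this sketch refuses.
            raise ValueError("invalid BCD digit")
        value = value * 100 + high * 10 + low
    return -value if raw[9] & 0x80 else value


def fbstp_encode(value: int) -> bytes:
    """Packed BCD image of an integer (FBSTP without its rounding step)."""
    if abs(value) >= 10 ** 18:
        # The real instruction signals an invalid operation; this sketch raises.
        raise OverflowError("value does not fit in 18 BCD digits")
    rest = abs(value)
    out = bytearray(10)
    for i in range(9):                    # least significant digit pair first
        rest, pair = divmod(rest, 100)
        out[i] = ((pair // 10) << 4) | (pair % 10)
    if value < 0:
        out[9] = 0x80                     # sign bit; other bits of byte 9 stay 0
    return bytes(out)
```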

There is, however, one concrete difference: NEx64T does not support the WAIT prefix (or FWAIT, opcode 9B in hexadecimal). In x87 code this prefix is used to check whether the previous FPU instruction has raised an exception that is still pending, before the next one is executed.

Almost all FPU instructions already perform this check on their own, except for a few “control” instructions (FNINIT, FNSTENV, etc.). For these, it was sufficient to provide two versions of each, with and without the built-in WAIT/FWAIT functionality, and to eliminate the prefix altogether.
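The behaviour that distinguishes the two versions can be sketched in Python with a toy model of the x87 status and control words. FINIT behaves as FWAIT followed by FNINIT. FWAIT only complains if an exception flag is set whose mask bit is clear. The FPU class is illustrative, and raising FloatingPointError stands in for the #MF fault.

```python
class FPU:
    """Toy model of the x87 state that WAIT/FWAIT looks at."""

    EXCEPTION_BITS = 0x3F            # IE, DE, ZE, OE, UE, PE (bits 0-5)

    def __init__(self) -> None:
        self.fninit()

    def fninit(self) -> None:
        """No-wait form: reset without checking for pending exceptions."""
        self.status = 0x0000
        self.control = 0x037F        # default after (F)NINIT: all exceptions masked

    def pending_unmasked(self) -> bool:
        """True if some exception flag is set while its mask bit is clear."""
        return bool(self.status & ~self.control & self.EXCEPTION_BITS)

    def fwait(self) -> None:
        if self.pending_unmasked():
            raise FloatingPointError("pending unmasked x87 exception (#MF)")

    def finit(self) -> None:
        """Waiting form: equivalent to FWAIT followed by FNINIT."""
        self.fwait()
        self.fninit()
```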

MMX/SSE extensions

Although it was “only” introduced in 1997, the MMX extension (which reuses the registers of the aforementioned x87 FPU) is also part of the legacy baggage of x86 / x64, as it was replaced within just two years by the later SSE (which is the basis for all subsequent SIMD extensions from Intel).

In the meantime, however, a lot of code had been written (at the height of the SIMD boom), and traces of it can still be found today, sometimes in substantial amounts. For example, among the instructions disassembled from the 32-bit version of WinUAE (the best-known Amiga emulator), MMX instructions amount to about 10% of the SSE ones.

The situation is similar for SSE, which is by far the most widely used and, moreover, the foundation of x64 (SSE2 is a mandatory part of that ISA, not just a recommendation). This holds even though the far more advanced and better-performing AVX (and its successors) have been spreading for several years now.

In this case, the decision taken was to maintain support for them, suitably transforming them, revitalising them, and grouping them into an orthogonal and very well organised set of instructions (especially trivial to decode, since there are no prefixes of any kind!), as we shall see in the next article.

16-bit addressing modes

We have already seen in the second article how complicated it is to decode x86/x64 instructions. This is especially true of the part dealing with a memory operand's addressing mode: one byte for the so-called ModR/M, possibly another for the SIB, followed by further bytes for an optional displacement.
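To make that complexity concrete, this Python sketch computes how many bytes the ModR/M byte, the optional SIB, and the displacement occupy with 32-bit addressing. 64-bit mode follows the same length rules, except that mod 00 with rm 101b means RIP-relative. The sketch ignores prefixes, opcodes, and immediates, and the function names are illustrative.

```python
from typing import Optional, Tuple


def modrm_fields(modrm: int) -> Tuple[int, int, int]:
    """Split a ModR/M byte into its mod (2 bits), reg (3 bits) and rm (3 bits)."""
    return modrm >> 6, (modrm >> 3) & 7, modrm & 7


def memory_operand_bytes(modrm: int, sib: Optional[int] = None) -> int:
    """Bytes used by ModR/M + optional SIB + displacement (32-bit addressing)."""
    mod, _reg, rm = modrm_fields(modrm)
    if mod == 3:
        return 1                        # register operand: ModR/M only
    size = 1                            # the ModR/M byte itself
    has_sib = rm == 4                   # rm = 100b announces a SIB byte
    if has_sib:
        if sib is None:
            raise ValueError("this ModR/M byte requires a SIB byte")
        size += 1
    if mod == 1:
        size += 1                       # 8-bit displacement
    elif mod == 2:
        size += 4                       # 32-bit displacement
    elif rm == 5:
        size += 4                       # mod 00, rm 101b: disp32 only
    elif has_sib and (sib & 7) == 5:
        size += 4                       # mod 00, SIB base 101b: disp32, no base
    return size
```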

In NEx64T there is nothing so convoluted. The instructions that can reference one or more operands in memory are clearly defined and grouped, and a single extension word (2 bytes; 16 bits) specifies, simply and precisely, which of the available addressing modes to use.

As mentioned at the beginning, the 16-bit execution modes are not supported. Their addressing modes (e.g. [BX + SI]), which differed somewhat from those introduced later with 32-bit mode, were therefore not implemented either.

Should they be needed, however, they could easily be emulated at the cost of executing an additional instruction to be used to calculate the 16-bit address, and then using the result in the next instruction.
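That emulation can be sketched in Python. The table lists the eight base/index combinations selected by the rm field in 16-bit addressing. The function performs the one extra step of computing the address and truncating it to 16 bits. The register dictionary and function name are assumptions for illustration, and the default use of SS for BP-based forms is ignored.

```python
# Base/index registers selected by the rm field in 16-bit addressing.
RM16 = {
    0: ("bx", "si"), 1: ("bx", "di"), 2: ("bp", "si"), 3: ("bp", "di"),
    4: ("si",), 5: ("di",), 6: ("bp",), 7: ("bx",),
}


def ea16(regs: dict, mod: int, rm: int, disp: int = 0) -> int:
    """16-bit effective address, wrapping at 64 KiB as the original modes did.

    disp is already sign-extended (mod 01) or a 16-bit value (mod 10 / direct).
    """
    if mod == 3:
        raise ValueError("mod 11b selects a register, not memory")
    if mod == 0 and rm == 6:
        return disp & 0xFFFF            # direct [disp16], no base register
    total = sum(regs[name] for name in RM16[rm]) + disp
    return total & 0xFFFF               # the extra instruction: add, then truncate
```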

Conclusions

As can be seen, almost all the legacy of x86 / x64 has been removed from the architecture, leaving only that which was absolutely impossible to do without (in particular, certain segmentation functionalities).

The result is that the NEx64T cores occupy less space because the implementation cost is reduced to a minimum, simplifying not only the decoder, but also the backend that deals with executing instructions.

As anticipated, the next article will cover more of the innovations that the new architecture brings, focusing this time on the SIMD/vector unit. This is another of its strong points, as we shall also see through a well-known example, showing its implementation and comparing it with the best-known and most celebrated ISAs.